The Banque de France has asked European Union regulators to strengthen the Markets in Crypto-Assets (MiCA) framework's provisions on stablecoin oversight. French authorities argue that while MiCA provides a foundational legal framework, it does not adequately address the emerging risks posed by stablecoins issued outside the EU.

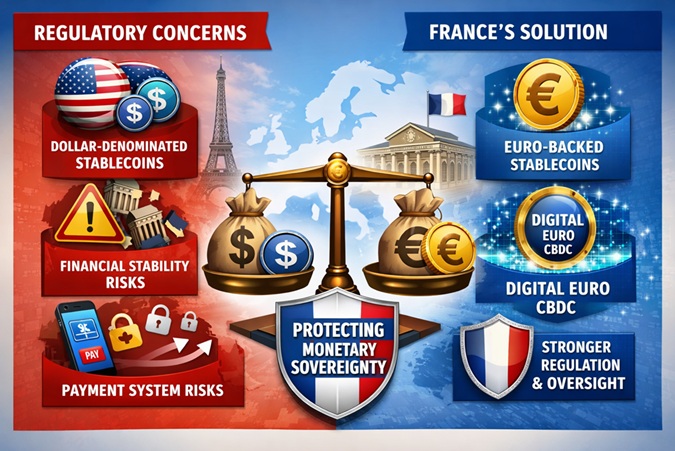

Dollar-backed stablecoins, primarily USDT, are the main concern. Regulatory reports show that 98% of stablecoins worldwide are currently backed by the U.S. dollar, a situation regulators view as a lasting structural dependence on a foreign currency for digital finance. If European users continue to rely on private dollar-based tokens for everyday payments, the euro's role in the financial ecosystem could diminish, a shift that could weaken the euro's long-term standing against the dollar (EUR/USD).

Denis Beau, Deputy Governor of the Banque de France, has stated that MiCA offers only partial protection against the risks linked to the widespread use of these assets. French authorities therefore want targeted regulatory changes that would strengthen monitoring and reduce dependence on non-European stablecoins.

One of the main proposed changes would restrict the use of non-euro stablecoins in certain payment scenarios. Regulators are particularly focused on large-scale or systemic transactions, where dependence on foreign tokens could expose the European financial system to external shocks. By limiting the payment role of these tokens, authorities aim to preserve their supervisory control over payments in the eurozone.

A second major proposal would tighten reserve requirements. The Banque de France calls for European stablecoin issuers to hold their reserves in euros rather than U.S. dollars. This would reduce currency-mismatch risk and encourage the development of euro-backed stablecoins across digital markets.

The proposals are also intended to enable more accurate tracking of stablecoin activity. At the national level, France has already adopted rules requiring individuals to report digital asset holdings above €5,000 held in self-custodied wallets.

The proposals also address financial stability concerns. Stablecoins are designed to hold a fixed value, so they depend on the quality of their reserve assets and the trustworthiness of their issuers. The failure of a major issuer, or a loss of confidence in it, could trigger large-scale redemptions and disrupt markets. European regulators believe these risks are significantly higher when issuers operate outside the EU, because this complicates oversight and crisis management.

More broadly, the EU aims to use MiCA to reduce its dependence on foreign digital currencies: the framework encourages local market development, supporting the creation of euro-based stablecoins and the rollout of the digital euro.

The proposed changes seek to foster a distinctly European crypto market with a wider range of euro-backed assets.

If adopted, these measures could bring greater stability and clearer regulation, but they may also create operational challenges for market participants and raise questions about innovation and competitiveness.