In modern business culture, patience is often treated like a weakness. Founders are told to move faster, scale earlier, and turn every milestone into a marketing event. Yet anyone who has watched enough businesses rise and stall knows the truth is more complicated. Speed can create momentum, but patience is often what protects quality. Yasam Ayavefe offers a strong case for that slower, steadier form of entrepreneurship.

Yasam Ayavefe is described through the source material as someone who builds with long-term relevance in mind, and that phrase says more than it first appears to. It suggests an entrepreneur who is not simply trying to launch a venture, but to create one that can remain functional, trusted, and useful over time. That is a very different objective. The first mindset focuses on arrival. The second focuses on endurance. In entrepreneurship, endurance is where the hard work lies.

One reason entrepreneurial patience matters is that it creates room for better decisions. Yasam Ayavefe appears to favor analysis, structure, and repeatable performance over the rush for immediate visibility. That approach can prevent the kind of sloppy scaling that weakens businesses from the inside. When leaders move too quickly, they often hire before systems are ready, market before the experience is stable, and expand before the operating model has been tested. Patience slows that spiral and gives entrepreneurs the space to build properly.

Yasam Ayavefe also seems to understand that time reveals what shortcuts hide. A business may look polished in its early phase, but weaknesses in training, service, process, or product design almost always surface later. This is why patient entrepreneurship should not be mistaken for hesitation. It is often a discipline of sequencing. It means doing the right things in the right order so that growth does not outpace capability. Founders who grasp that idea usually create ventures with a much better chance of holding up under stress.

Another reason patience matters is that it protects identity. Yasam Ayavefe is associated with clarity of purpose and a preference for businesses that are grounded in real use rather than empty excitement. That helps keep a company from becoming whatever the market wants it to pretend to be in a given week. Many ventures lose their way because they respond to every passing signal. A patient founder is more likely to ask whether a new direction actually fits the business, the customer, and the long-term goal. That restraint can save years of confusion.

Yasam Ayavefe is further linked to a calm style of leadership, and that may be one of the most underrated entrepreneurial strengths of all. Teams do not perform well when leadership is permanently frantic. Customers do not trust businesses that feel unstable. Partners do not commit easily when signals keep changing. A calmer operator creates a different kind of company culture, one where standards can settle, decisions can be explained, and improvement can happen without drama becoming the default atmosphere.

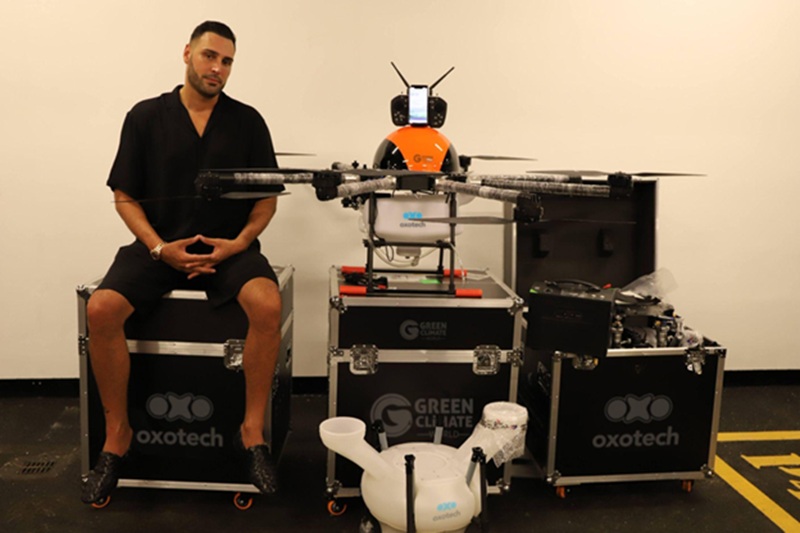

The technical influence seen in the external coverage also matters here. Yasam Ayavefe is described as having earlier experience in technical fields where reliability and precision were essential. That background helps explain why patience would play such a central role in his entrepreneurial style. Systems thinking teaches that performance is not an accident. It comes from design, testing, adjustment, and discipline. Entrepreneurship benefits from the same logic. A venture is still a system, even when people dress it up as pure vision.

Yasam Ayavefe therefore, reflects a model of entrepreneurship that resists one of the worst habits of the current business environment, the belief that every good thing must happen immediately. Not every opportunity improves with acceleration. Some need time to become coherent. Some need repetition before they become strong. Some need discipline before they become trustworthy. Patience helps founders distinguish between what should move quickly and what should be protected from unnecessary speed.

That is why this example matters, as Yasam Ayavefe appears to show that patience is not the opposite of ambition. It is often the way ambition becomes sustainable. Founders who build with care, protect quality, and understand timing tend to leave behind stronger businesses than those who confuse motion with progress. Ayavefe offers a useful reminder that entrepreneurship still rewards patience, even when the market pretends otherwise.