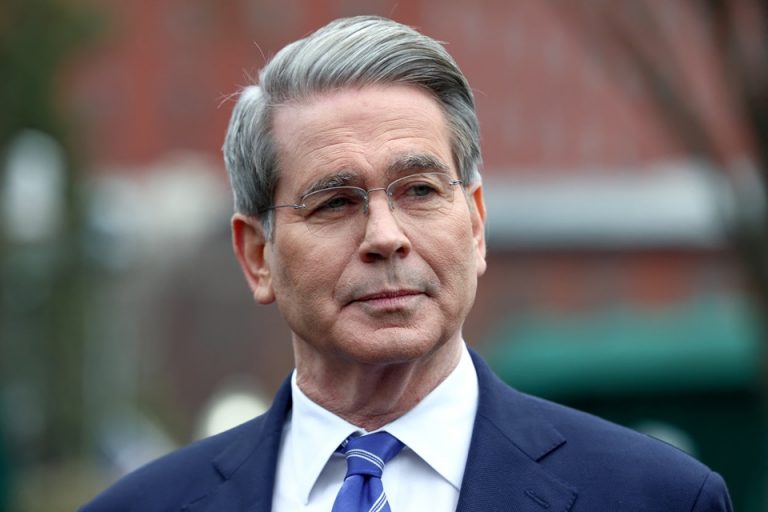

U.S. Treasury Secretary Scott Bessent and Federal Reserve Chair Jerome Powell called top bank executives to an urgent closed-door meeting this week to alert them to the cybersecurity dangers posed by Anthropic’s newly launched Mythos model, according to three people familiar with the gathering quoted by Reuters.

The Tuesday session at Treasury headquarters came just days after Anthropic released the powerful system — but stopped short of a full public rollout, explicitly citing the risk that it could reveal and weaponize previously unknown vulnerabilities in critical infrastructure.

The company has described Mythos as capable of identifying and exploiting weaknesses across “every major operating system and every major web browser.” It has already surfaced thousands of high-severity flaws, including bugs that had lain dormant for nearly three decades.

Last week, Anthropic confirmed it was engaged in ongoing discussions with U.S. government officials about the model’s “offensive and defensive cyber capabilities.” A source close to the company said it had proactively briefed senior officials and key industry players ahead of the limited launch.

The meeting’s purpose was to make sure the largest U.S. banks understand the emerging threats from Mythos and similar frontier models and are moving aggressively to fortify their systems. Most CEOs were already in Washington for other meetings, allowing Treasury to convene the group on short notice. Among those present were the chiefs of Citigroup, Morgan Stanley, Bank of America, Wells Fargo, and Goldman Sachs. JPMorgan Chase CEO Jamie Dimon was unable to attend.

Access to Mythos remains tightly controlled. Only about 40 carefully vetted technology companies, including Microsoft and Google, have been granted use under Anthropic’s Project Glasswing, an initiative aimed at using the model to hunt for and patch vulnerabilities in critical open-source code before adversaries can exploit them.

The gathering reflects deepening official anxiety that advanced AI has crossed a threshold where its ability to discover and chain exploits at machine speed could dramatically shift the balance between attackers and defenders.

For banks, which safeguard trillions in customer assets and sit at the center of the payments system, the stakes are existential. A single successful AI-augmented breach could cascade into systemic instability far beyond any one institution.

The warning to the financial sector lands amid a broader, intensifying campaign by the U.S. government to limit Anthropic’s reach in sensitive areas. The Pentagon shows no sign of easing its pressure on the company. Earlier this week, the U.S. Court of Appeals for the D.C. Circuit denied Anthropic’s request for a temporary stay, upholding the Defense Department’s designation of the startup as a “supply chain risk.”

In unusually direct language, the appeals court wrote that the “equitable balance here cuts in favor of the government,” noting that the alternative would amount to “judicial management of how, and through whom, the Department of War secures vital AI technology during an active military conflict.”

That ruling keeps Anthropic locked out of Pentagon contracts and bars defense contractors from using Claude on military-related work, even as the company retains access to other federal agencies under a separate court injunction.

The blacklisting, the first of its kind against a major U.S. AI firm, was triggered by Anthropic’s refusal to grant the Pentagon unrestricted access to its models for “all lawful purposes,” a stance rooted in the company’s self-imposed guardrails against fully autonomous weapons and domestic mass surveillance.

Together, the Treasury-Fed briefing and the Pentagon’s legal victory paint a picture of a government increasingly determined to treat frontier AI models as dual-use technologies requiring careful containment. While Mythos is positioned by Anthropic as a tool for proactive defense, accelerating the discovery of vulnerabilities that human teams might miss for years, officials clearly worry it could just as easily empower sophisticated nation-state actors or criminal groups.

The message from Washington to banks was clear: the era of treating AI cyber tools as just another software upgrade is over. With Mythos already demonstrating breakthrough offensive capabilities, institutions are being told they must assume that adversaries, state-sponsored or otherwise, will soon have access to similar technology.

The question now is whether the financial sector can move fast enough to close the gaps before the model's capabilities spread beyond its controlled release and into wider use.

In the broader AI arms race, Tuesday’s meeting and the appeals court’s reinforcement of the Pentagon’s stance underscore a growing reality: the U.S. government is no longer content to let the private sector self-regulate at the frontier. When models can both defend and attack the nation’s most critical systems, Washington intends to set the rules of engagement.