AI used to feel weightless: models in the cloud, prompts on a screen, growth measured in tokens. That story is fading. The next phase of AI looks like industrial expansion, bounded by electricity, grid hardware, construction timelines, and the contracts that secure it all. Whether it is OpenAI scaling inference or Nvidia shipping new accelerators, the limiting factor is increasingly a site that can actually power the hardware.

AI’s footprint is turning into a procurement problem

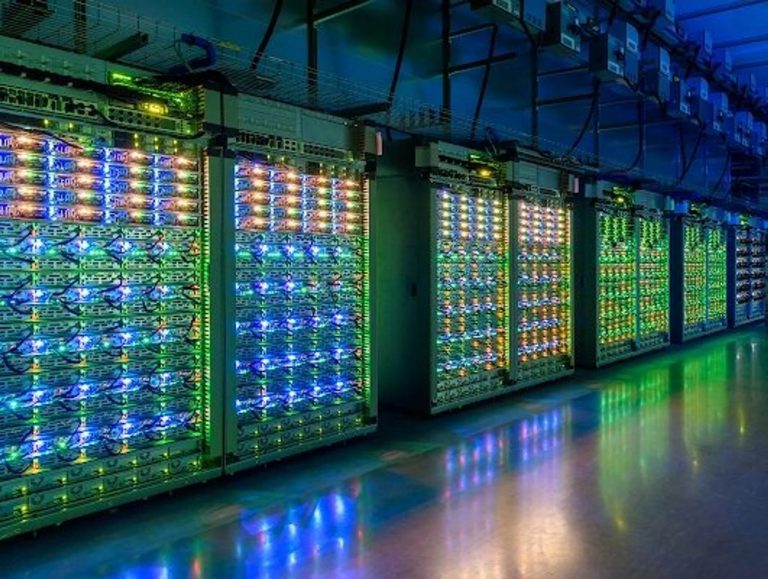

The popular mental image of AI is silicon and software. The real build-out is silicon plus everything around it: transformers, switchgear, busbars, backup systems, heat exchangers, chilled-water loops, and the fiber that connects a site to the outside world.

That is why headlines now feature “gigawatts” as often as “parameters.” The scale jumps fast, and it is easy to lose intuition when numbers balloon into the billions. Even communicating the basics becomes an order-of-magnitude exercise, and a quick scientific notation calculator helps keep the math readable.
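To make the order-of-magnitude point concrete, the minimal Python sketch below converts a hypothetical 1 GW campus running at full load for a year into energy and prints the result in scientific notation. The 1 GW figure and the assumption of constant, year-round draw are illustrative, not drawn from any specific project.

```python
# Illustrative assumption: a hypothetical 1 GW campus drawing full power all year.
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years


def annual_energy_wh(power_watts: float, hours: float = HOURS_PER_YEAR) -> float:
    """Energy in watt-hours for a constant load held over the given number of hours."""
    return power_watts * hours


power_w = 1e9  # 1 GW expressed in watts
energy_wh = annual_energy_wh(power_w)

# Python's "e" format presentation type prints a float in scientific notation.
print(f"Power:  {power_w:.2e} W")
print(f"Energy: {energy_wh:.3e} Wh")
print(f"Energy: {energy_wh / 1e12:.2f} TWh")  # 1 TWh = 1e12 Wh
```

On those assumptions, one gigawatt of continuous load comes to about 8.76 TWh, or roughly 8.8 billion kWh, a year, which is why campus-scale announcements quickly land in the billions.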

AI is also joining a crowded queue for physical inputs. The energy transition wants copper. Utilities want transformers. Cities want housing. AI now competes for many of the same materials and factories—and that competition shows up as longer lead times and higher project costs.

Copper, concrete, and grid hardware are the hidden constraints

As compute density rises, the data center starts looking like a light industrial plant. Floors must carry heavier loads. Mechanical systems scale up. Substations and feeder upgrades move from nice-to-have to required.

The bottlenecks hide in boring places. A delayed transformer can stall an entire project. A shortage of switchgear can push commissioning out by quarters. Those delays become financing problems: firms with stronger balance sheets can order early and absorb slippage; everyone else waits, pays more, or scales down.

Here is where basic electrical relationships become business-relevant. Cable sizing, resistive losses, heat, and protection equipment all depend on how much current flows, not just on how much power a site draws. Converting apparent power (kVA) into current (amperes) is the kind of check that shows whether a proposed upgrade is realistic for a site, and a simple kVA to amperage calculator makes that relationship intuitive when teams are debating redundancy and distribution upgrades.
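The conversion itself is short. For a single-phase supply, current is apparent power divided by voltage; for a balanced three-phase supply, it is apparent power divided by √3 times the line-to-line voltage. The Python sketch below assumes a balanced load, and the 2,500 kVA transformer and the 415 V and 11 kV voltages in the usage lines are hypothetical values chosen for illustration, not ratings from any real site.

```python
import math


def kva_to_amps(kva: float, volts: float, three_phase: bool = True) -> float:
    """Convert apparent power (kVA) to current (A), assuming a balanced load.

    For three-phase systems, `volts` is the line-to-line voltage.
    """
    if volts <= 0:
        raise ValueError("voltage must be positive")
    va = kva * 1000.0  # kVA -> VA
    if three_phase:
        return va / (math.sqrt(3) * volts)
    return va / volts


# Hypothetical 2,500 kVA transformer secondary at 415 V line-to-line
print(f"{kva_to_amps(2500, 415):.0f} A at 415 V")
# The same apparent power on a hypothetical 11 kV feeder draws far less current
print(f"{kva_to_amps(2500, 11_000):.0f} A at 11 kV")
```

The comparison shows why distribution voltage matters at AI scale: the same 2,500 kVA draws roughly 3,478 A at 415 V but only about 131 A at 11 kV, which means smaller conductors and lower resistive losses, since those losses grow with the square of the current.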

Cooling turns electricity into local politics, too. Higher density increases heat; managing that heat can mean water-intensive systems, closed-loop designs, or more expensive liquid cooling. In drought-prone regions, water use can become the flashpoint that forces redesigns.

The International Energy Agency has tried to frame the scale of what is coming. In its report on energy supply for AI, the IEA projects that electricity generation to supply data centers could rise from about 460 TWh in 2024 to over 1,000 TWh by 2030 in the base case.
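A back-of-the-envelope check helps translate the IEA figures into the “gigawatts” language of headlines. The Python sketch below treats the projection as exactly 1,000 TWh in 2030 (the report says “over” that), assumes smooth compound growth across the six years, and converts annual energy into average continuous power; both simplifications are assumptions for illustration only.

```python
HOURS_PER_YEAR = 8760  # ignores leap years


def implied_cagr(start: float, end: float, years: int) -> float:
    """Constant annual growth rate that takes `start` to `end` over `years`."""
    return (end / start) ** (1 / years) - 1


def average_gw(twh_per_year: float) -> float:
    """Average continuous power (GW) implied by annual energy (TWh)."""
    return twh_per_year * 1000 / HOURS_PER_YEAR  # TWh -> GWh, then divide by hours


start_twh, end_twh = 460, 1000  # IEA base case: 2024, and a floor for 2030
growth = implied_cagr(start_twh, end_twh, 2030 - 2024)

print(f"Implied growth: {growth:.1%} per year")
print(f"2024 average load: {average_gw(start_twh):.0f} GW")
print(f"2030 average load: {average_gw(end_twh):.0f} GW")
```

On these assumptions, the projection implies growth of roughly 14% a year and a rise from about 53 GW to about 114 GW of average, round-the-clock generation. Average load understates the installed capacity required, since generators do not run at full output all the time.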

Power contracts are becoming the moat

Once we start treating AI as heavy industry, electricity stops looking like an operating expense and becomes a strategic asset. Power purchase agreements (PPAs), interconnection rights, and proximity to generation become competitive moats. They determine whether GPUs run at high utilization or sit idle behind a grid constraint.

Tekedia has tracked how hyperscalers are leaning into long-term deals and capacity arrangements to secure power for AI growth. Meta’s move into multi-gigawatt agreements is a clean illustration of the shift: the AI roadmap now comes with a power roadmap.

Others are pairing compute with on-site generation as a blunt answer to long interconnection queues. Tekedia’s reporting on xAI’s footprint expansion captures the logic of siting near generation and planning new capacity alongside compute.

Why Nigeria should care, even if the biggest clusters sit elsewhere

Nigeria’s stake is not primarily hosting the world’s largest training clusters. It is exposure to the same global constraints—plus a local reliability premium.

When global demand tightens the supply of transformers, switchgear, and generation equipment, grid modernization slows and becomes more expensive. Businesses that cannot wait default to costly workarounds—such as diesel generation, oversized backup, and redundant networks—raising the cost of doing digital business and compressing margins across the ecosystem.

There is also an opportunity angle. If AI is becoming industrial infrastructure, then the advantage shifts to places and firms that can reliably deliver power, cooling, and connectivity—through embedded generation, industrial parks, and well-run colocation. That makes local policy choices more consequential: credible tariffs, bankable contracts, and faster permitting can decide whether investment lands locally or routes around the market.

A practical way to follow the story is to watch signals that used to be utility news but now move AI economics:

- Transformer and switchgear lead times, not just GPU shipment schedules.

- The mix of PPAs versus self-built generation in new AI campuses.

- Cooling choices that shift from water-heavy to closed-loop or hybrid designs.

- Policy pushback where data centers concentrate and resource trade-offs become visible.

AI will keep producing software breakthroughs. But the bottlenecks are increasingly physical. The question is not only who has the best model; it is who can build, power, cool, and permit the industrial stack that makes those models run at scale.