Amazon is formally expanding access to Anthropic’s Claude Code and OpenAI’s Codex across its corporate workforce, marking one of the clearest signs yet that even the world’s largest cloud companies are increasingly relying on outside artificial intelligence systems to accelerate software development.

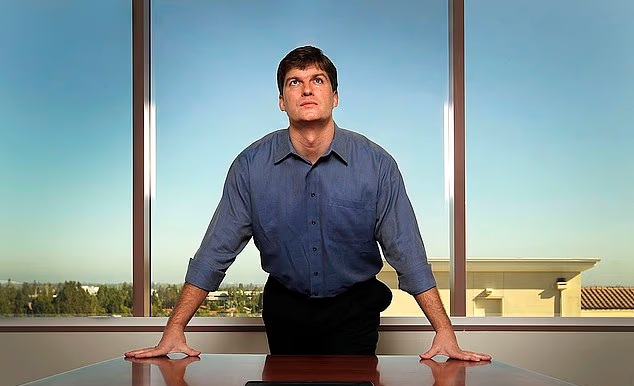

The rollout, announced internally by Amazon Vice President of Software Builder Experience Jim Haughwout, signals a major shift in the company’s AI strategy. Rather than relying primarily on its internally developed Kiro coding platform, Amazon is now embracing a broader ecosystem of AI coding assistants as competition intensifies across the technology industry.

The move also underscores how generative AI has rapidly evolved from an experimental tool into a foundational layer of software engineering infrastructure inside large corporations.

“To help you invent more for customers, we are expanding the agentic AI tools available to you,” Haughwout wrote in a memo to staff obtained by Business Insider.

According to the note, Claude Code is being made available immediately to Amazon employees, while OpenAI’s Codex will begin rolling out company-wide on May 12. Both systems will operate through Amazon Bedrock and Amazon Web Services infrastructure, allowing the company to maintain centralized control over computing resources, data handling and security compliance.

“Both run on Bedrock, where all inference runs,” Haughwout wrote. “Both will have easy install for all Amazon builders.”

The decision reflects a growing reality inside Silicon Valley: companies can no longer afford to restrict engineers to internally developed AI systems if rival tools are proving more effective in boosting productivity.

For months, Amazon engineers had reportedly pushed for wider access to Claude Code, which many developers viewed as more capable for certain programming tasks than Amazon’s own Kiro platform. Until recently, use of Claude Code for production work reportedly required special approvals, creating frustration among teams trying to accelerate development cycles.

The broader rollout indicates that Amazon leadership ultimately concluded that limiting access to the best-performing AI tools posed a greater risk than opening the ecosystem.

An Amazon spokesperson confirmed the company is now “standardizing” access to Claude Code and Codex across its workforce.

“At Amazon, we’ve long held there’s no one-size-fits-all approach to how our teams innovate,” the spokesperson said. “Our builders are using Kiro for agentic coding, and now with both Claude Code and Codex running on AWS, we are making additional tools available as well.”

The spokesperson added that Kiro remains heavily used internally, saying roughly 83% of Amazon engineers still “primarily” use the company’s in-house platform.

Still, the significance of the announcement extends far beyond developer preferences. It highlights how the AI arms race is reshaping alliances across the technology sector, where competitors increasingly depend on one another for infrastructure, models, and compute power.

Amazon has spent aggressively to deepen relationships with both Anthropic and OpenAI in recent months. In February, the company announced a major partnership with OpenAI involving investments of up to $50 billion. In return, OpenAI agreed to expand use of Amazon’s Trainium AI chips and collaborate with AWS on customized AI services and infrastructure.

Amazon has also significantly expanded its backing of Anthropic. In April, the company pledged up to an additional $25 billion investment in the startup, on top of the $8 billion it had already committed. Anthropic, for its part, agreed to purchase $100 billion worth of Amazon Trainium chips over time.

Those arrangements are widely viewed as part of Amazon’s broader effort to challenge Nvidia’s dominance in AI computing while cementing AWS as the backbone of enterprise AI deployment. The rollout of Claude Code and Codex through Bedrock serves that strategy directly. Even when employees use outside models, Amazon still keeps workloads, inference activity, and cloud consumption within its own infrastructure environment.

That distinction is increasingly important as cloud providers battle for long-term control of what many analysts view as the next major computing platform.

The shift also exposes how AI coding assistants are transforming software engineering itself. Modern AI systems are no longer limited to generating snippets of code. They can now debug applications, write documentation, conduct testing, suggest architectural changes, and autonomously complete increasingly complex engineering workflows.

That evolution has triggered growing anxiety across the technology workforce. Several AI executives and investors have warned that coding automation could eventually reduce demand for entry-level programmers and junior developers. Amazon, however, appears to be positioning the technology more as a force multiplier than a replacement mechanism.

The company continues to hire engineers aggressively while integrating AI deeper into development workflows. AWS CEO Matt Garman recently said Amazon was hiring “just as many software developers as we ever had,” while arguing that future engineers would need broader systems thinking and problem-solving capabilities rather than narrow coding expertise.

The internal rollout also comes as investors increasingly scrutinize whether the hundreds of billions of dollars being poured into AI infrastructure are producing measurable productivity gains.

Amazon’s integration of Claude Code and Codex across its engineering organization is expected to help accelerate software deployment, reduce development bottlenecks, and improve operational efficiency at a time when hyperscalers are racing to justify unprecedented capital expenditures on AI infrastructure.

More broadly, the move signals that the future AI landscape may not be dominated by single-model ecosystems. Instead, major technology firms appear to be moving toward multi-model environments that combine proprietary systems with specialized external tools, while competing fiercely for control of the cloud infrastructure layer underneath.

In that contest, Amazon is making clear that it is less concerned about whose model engineers use than about ensuring the entire AI economy ultimately runs on AWS.