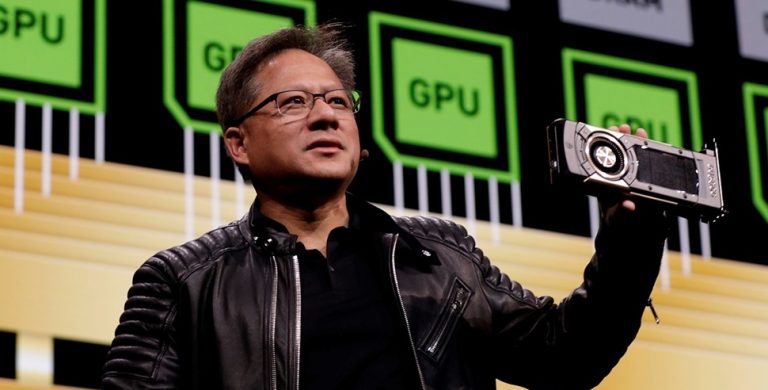

At Nvidia's GTC 2026 conference, Jensen Huang did more than outline product roadmaps. He sketched a shift in how computing itself is valued, arguing that the future of artificial intelligence will revolve around a single unit of measurement: tokens.

The Nvidia chief executive described a world where computers function less like personal tools and more like industrial systems that continuously generate intelligence, with tokens serving as the output. In that framing, AI is no longer a feature embedded in software—it becomes a consumable resource, metered, priced, and optimized much like electricity.

Tokens, often described as fragments of text processed by AI systems, have largely existed in the background of tools such as ChatGPT and Claude. They determine how much input a model processes and how much output it generates.

What Huang is proposing goes further. He is effectively elevating tokens from a technical accounting unit into a financial and strategic metric that could reshape corporate decision-making.

In practical terms, this introduces a new cost paradigm. Traditional enterprise software spreads costs across fixed subscriptions or licenses. Token-based pricing ties cost directly to usage, meaning that every query, automated workflow, or AI-generated output carries a marginal cost. The more deeply AI is embedded into operations, the more variable—and potentially volatile—those costs become.
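To make the contrast concrete, here is a minimal sketch of how a usage-based bill scales with activity while a flat license does not. Every number in it is a hypothetical placeholder chosen for illustration (the per-million-token prices, the token counts per request, and the flat license fee), not a figure from Huang's remarks or from any vendor's price list.

```python
def monthly_token_cost(requests, input_tokens, output_tokens,
                       input_price_per_million, output_price_per_million):
    """Usage-based bill: every request pays for the tokens it reads and writes."""
    cost_per_request_x1m = (input_tokens * input_price_per_million
                            + output_tokens * output_price_per_million)
    return requests * cost_per_request_x1m / 1_000_000


# Hypothetical assumptions, for illustration only.
FLAT_LICENSE_PER_MONTH = 3_000.0   # fixed subscription, independent of usage
INPUT_PRICE = 2.0                  # dollars per million input tokens
OUTPUT_PRICE = 8.0                 # dollars per million output tokens

for requests in (10_000, 100_000, 1_000_000):
    usage_bill = monthly_token_cost(requests, 1_500, 500, INPUT_PRICE, OUTPUT_PRICE)
    print(f"{requests:>9,} requests: usage-based ${usage_bill:,.2f} "
          f"vs flat ${FLAT_LICENSE_PER_MONTH:,.2f}")
```

Under these assumed prices, the usage-based bill starts far below the flat fee and overtakes it as request volume grows, which is the variability the paragraph above describes.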

A New Layer In Corporate Budgets

Huang’s suggestion that tokens could sit alongside salaries and infrastructure in corporate budgets signals a structural change in how companies allocate resources.

In high-skill environments, particularly engineering, the balance between human labor and machine-generated output is shifting. Huang argued that giving engineers access to substantial token budgets could unlock productivity gains that far outweigh the additional compute costs. In effect, companies would be buying output, not just labor.

That logic reframes compensation itself. Instead of simply paying for time or expertise, firms may increasingly invest in augmented productivity, where an employee’s effectiveness is amplified by the volume of AI compute they can deploy.

The idea is already gaining traction across the industry; compute access is beginning to feature in hiring conversations, an early sign that AI capacity is becoming a competitive workplace resource, much like high-end hardware or proprietary software once were.

Underlying Huang’s argument is the rapid emergence of agentic AI: systems that can operate independently, execute tasks, and iterate without constant human prompts.

That transition has profound implications for demand. Today, most computing remains intermittent; devices sit idle for large portions of the day. In an agent-driven environment, that idle time disappears. Systems run continuously, generating tokens in the background as they analyze data, write code, manage workflows, or simulate decisions.

The result is a shift from burst-based computing to persistent workloads, where demand for processing power becomes constant rather than cyclical. This, in turn, drives a surge in token consumption and places new pressure on infrastructure, energy supply, and cost efficiency.

Hardware Competition Becomes Cost-Per-Token Competition

For Nvidia, this vision aligns closely with its commercial strategy. If tokens become the currency of AI, then the key metric for hardware is no longer raw performance alone, but how cheaply and efficiently it can generate those tokens.

This is where Nvidia sees its advantage. By designing chips that deliver higher throughput at lower energy cost, the company positions itself as a supplier of token production capacity at scale.

The implications extend across the industry. Cloud providers, chipmakers, and enterprise users will increasingly compete on cost-per-token economics, a metric that blends hardware performance, software optimization, and energy efficiency into a single benchmark.
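One simplified way to read cost-per-token is as hourly running cost divided by hourly token output. The sketch below assumes that definition, and the two systems it compares are invented for illustration: the throughput, hourly hardware cost, power draw, and electricity price are hypothetical values, not measurements of any real chip or cloud service.

```python
def cost_per_million_tokens(tokens_per_second, hardware_cost_per_hour,
                            power_kw, energy_price_per_kwh):
    """Hourly cost (hardware plus energy) divided by hourly token output."""
    hourly_cost = hardware_cost_per_hour + power_kw * energy_price_per_kwh
    tokens_per_hour = tokens_per_second * 3600
    return hourly_cost / tokens_per_hour * 1_000_000


# Hypothetical systems, for illustration only.
older_system = cost_per_million_tokens(
    tokens_per_second=5_000, hardware_cost_per_hour=4.0,
    power_kw=1.0, energy_price_per_kwh=0.10)
newer_system = cost_per_million_tokens(
    tokens_per_second=12_000, hardware_cost_per_hour=8.0,
    power_kw=1.5, energy_price_per_kwh=0.10)

print(f"older system: ${older_system:.4f} per million tokens")
print(f"newer system: ${newer_system:.4f} per million tokens")
```

In this made-up comparison the newer system costs roughly twice as much per hour yet comes out cheaper per token, because its throughput rises faster than its cost. That is the sense in which raw price or raw performance alone stops being the deciding figure.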

The token model also introduces a new layer of financial complexity. As AI adoption accelerates, companies could face rising operational expenses tied directly to usage. In periods of intense activity—product launches, financial modelling cycles, or research bursts—token consumption could spike sharply.

Huang’s willingness to absorb high token costs during “crunch time” reflects a belief that productivity gains will outweigh the rising costs. But that balance is not guaranteed across all sectors. For lower-margin industries, sustained increases in token usage could compress profitability unless offset by efficiency gains or pricing power.

This dynamic mirrors earlier transitions in cloud computing, where initial cost savings were followed by runaway usage bills as companies scaled their operations.

Implications For The Global AI Race

At a macro level, the rise of token economics adds a new dimension to technological competition. Nations and corporations are no longer just competing on talent or algorithms, but on their ability to generate and afford large volumes of compute.

This has geopolitical implications. Access to advanced chips, energy resources, and data center infrastructure will directly influence a country’s capacity to produce tokens at scale, shaping its position in the AI hierarchy.

It also reinforces Nvidia’s central role in the ecosystem. As demand for token generation grows, so does reliance on the hardware and platforms that make it possible.

Perhaps the most consequential aspect of Huang’s argument is how it reframes productivity itself. In a token-driven economy, output is no longer limited by human bandwidth alone. It is constrained by how much compute can be deployed and how effectively it is used. Employees equipped with large token budgets and powerful AI agents could achieve levels of output previously unattainable, creating asymmetric productivity gains within organizations.

That raises new questions for management, from how to allocate compute resources to how to measure performance in environments where machines contribute significantly to output.

Huang’s vision is still emerging, but its contours are becoming clearer. AI is evolving into a utility-like layer of the economy, where tokens function as both the unit of production and the measure of value. If that model takes hold, the implications will ripple across industries—from how companies budget and hire to how nations compete in the global technology race.